Wednesday, November 25, 2020

This article gives you an idea of which web scraping tool you should use to scrape from Amazon. The list includes small-scale browser extensions and multi-functional web scraping software, compared along three dimensions:

- the degree of automation

- how friendly the user interface is

- how much can be used for free

Browser Extensions

The main appeal of an extension is that it is easy to reach, so you can get a feel for web scraping quickly. With rather basic functions, these options suit casual scraping or small businesses that need simply structured information in small amounts.

Data Miner

Data Miner is an extension that works on Google Chrome and Microsoft Edge. It helps you scrape data from web pages into a CSV file or Excel spreadsheet. A number of custom recipes are available for scraping Amazon data. If the recipes on offer are exactly what you need, this could be a handy tool for scraping Amazon within a few clicks.

Data scraped by Data Miner

Data Miner has a friendly step-by-step interface and basic web scraping functions. It is best suited to small businesses or casual use.

There is a page limit (500/month) for the free plan with Data Miner. If you need to scrape more, professional and other paid plans are available.

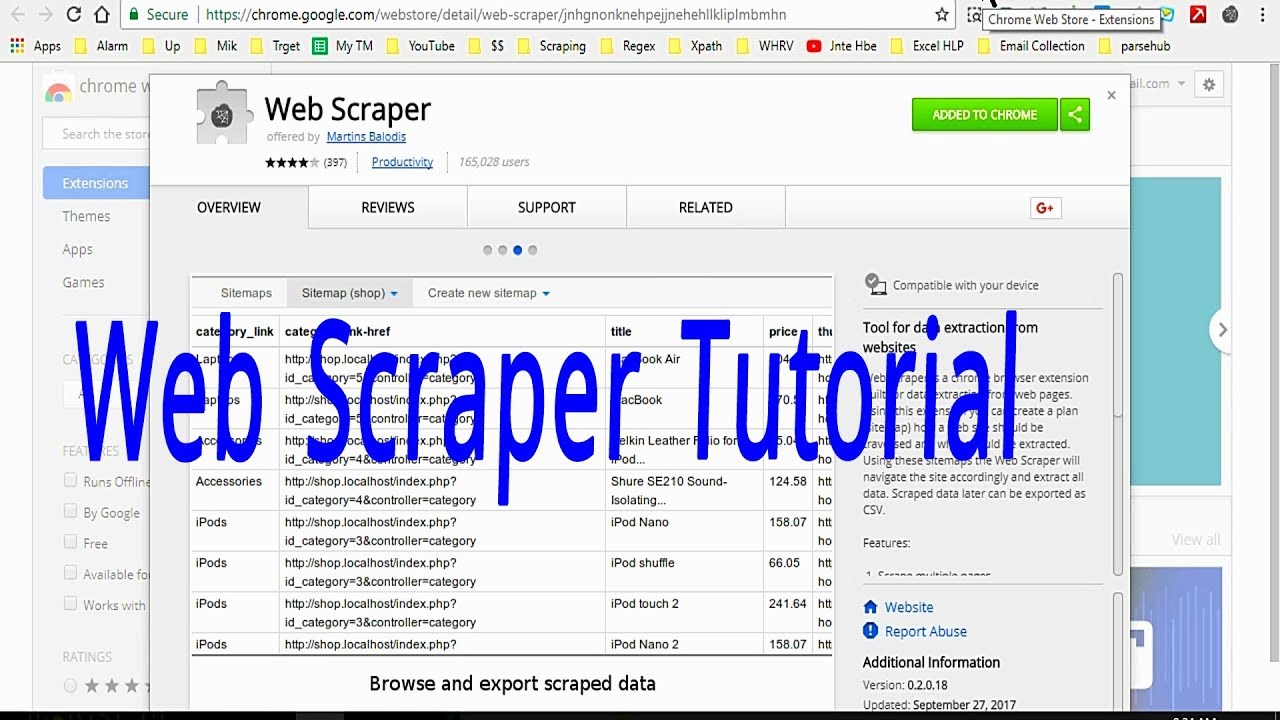

Web Scraper

Web Scraper is an extension with a point-and-click interface integrated into the browser's developer tools. It has no ready-made templates for e-commerce or Amazon scraping, so you have to build your own crawler by selecting the listing information you want on the web page.

UI integrated in the developer tool

Web Scraper offers functions such as cloud extraction, scheduled scraping, IP rotation, and API access on its paid plans. This makes it capable of more frequent scraping and of scraping larger volumes of information.

Scraper Parsers

Scraper Parsers is a browser extension that extracts unstructured data and visualizes it without code. Extracted data can be viewed on the site or downloaded in various formats (XLSX, XLS, XML, CSV), and the numbers can be displayed in charts.

Small draggable Panel

The UI of Parsers is a panel that you can drag around the browser, selecting elements by clicking on them. It also supports scheduled scraping. However, it does not seem stable enough and gets stuck easily. A visitor is limited to 600 pages per site, and you can get 590 more if you sign up.

Amazon Scraper - Trial Version

Amazon Scraper is available in Chrome's extension store. It can help scrape the price, shipping cost, product header, product information, product images, and ASIN from the Amazon search page.

Right-click and scrape

Go to the Amazon website and run a search. When you are on the search page with the results you want to scrape, right-click and choose the 'Scrap Asin From This Page' option. The information will be extracted and saved as a CSV file.

This trial version can only download 2 pages of any search query. You need to buy the full version to download unlimited pages and get 1 year of free support.

Scraping Software

If you need to scrape from Amazon regularly, you may run into some annoying problems that keep you from reaching the data: IP bans, CAPTCHAs, login walls, pagination, data in different structures, and so on. To solve these problems, you need a more powerful tool.

Octoparse

Octoparse is a web scraping tool with a free-for-life plan. It helps users quickly scrape web data without coding. Compared with the others, its highlight is a graphical, intuitive UI. Its auto-detection function is also worth mentioning: it saves you from clicking around in confusion and ending up with messy data.

Besides auto-detection, the Amazon templates are even more convenient. Using templates, you can obtain product list information as well as detail page information on Amazon. You can also create a more customized crawler yourself in the advanced mode.

Plenty of templates available for use on Octoparse

Even on the free plan there is no overall cap on the amount of data you scrape, as long as each task stays within 10,000 rows.

Amazon data scraped using Octoparse

Powerful functions such as cloud service, scheduled automatic scraping, and IP rotation (to prevent IP bans) are offered in the paid plans. If you want to monitor stock numbers, prices, and other information about an array of shops or products on a regular basis, they are definitely helpful.

ScrapeStorm

ScrapeStorm is an AI-powered visual web scraping tool. Its smart mode works similarly to the auto-detection in Octoparse, intelligently identifying the data with little manual operation required. You just need to enter the URL of the Amazon page you want to scrape.

Its Pre Login function helps you scrape URLs that require login to view content. Generally speaking, the UI design of the app is like a browser and comfortable to use.

Data scraped using ScrapeStorm

ScrapeStorm offers a free quota of 100 rows of data per day, with one concurrent run allowed. Data only becomes valuable once you have enough of it for analysis, so consider upgrading if you choose this tool: the professional plan gives you 10,000 rows per day.

ParseHub

ParseHub is another free web scraper available for direct download. Like most of the scraping tools above, it supports building crawlers in a click-and-select way and exporting data into structured spreadsheets.

For Amazon scraping, ParseHub doesn't support auto-detection or offer any Amazon templates. However, if you have prior experience using a scraping tool to build customized crawlers, you can give it a try.

Build your crawler on ParseHub

You can save images and files to Dropbox and run with IP rotation and scheduling if you start from a standard plan. Free plan users get 200 pages per run. Don't forget to back up your data (14-day data retention).

Something More than Tools

Tools are created for convenience. They make complicated operations possible through a few clicks on a bunch of buttons.

However, users also commonly encounter unexpected errors, because the situation keeps changing across different sites. You can dig a little deeper to rescue yourself from such a dilemma: learn a bit about HTML and XPath. That is far from becoming a coder, just a few steps toward knowing the tool better.

Author: Cici

Sometimes the data you need is available online, but not through a dedicated REST API. Luckily for JavaScript developers, there are a variety of tools available in Node.js for scraping and parsing data directly from websites to use in your projects and applications.

Let's walk through 4 of these libraries to see how they work and how they compare to each other.

Make sure you have up-to-date versions of Node.js (at least 12.0.0) and npm installed on your machine. Then run npm init --yes in the directory where you want your code to live.

For some of these applications, we'll be using the Got library for making HTTP requests, so install it by running npm install got in the same directory.

As the example problem to solve with each of these libraries, let's try finding all of the links to unique MIDI files on this web page from the Video Game Music Archive, which hosts a bunch of Nintendo music.

Tips and tricks for web scraping

Before moving onto specific tools, there are some common themes that are going to be useful no matter which method you decide to use.

Before writing code to parse the content you want, you typically will need to take a look at the HTML that’s rendered by the browser. Every web page is different, and sometimes getting the right data out of them requires a bit of creativity, pattern recognition, and experimentation.

There are helpful developer tools available to you in most modern browsers. If you right-click on the element you're interested in, you can inspect the HTML behind that element to get more insight.

You will also frequently need to filter for specific content. This is often done using CSS selectors, which you will see throughout the code examples in this tutorial, to gather HTML elements that fit specific criteria. Regular expressions are also very useful in many web scraping situations. On top of that, if you need a little more granularity, you can write functions to filter through the content of elements, such as this one for determining whether a hyperlink tag refers to a MIDI file:
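A minimal sketch of such a filter function. It assumes each link exposes its target as an href property, the way DOM anchor elements do; the sample objects are hypothetical stand-ins for real elements.

```javascript
// Returns true when a link-like object points at a MIDI file.
const isMidi = (link) => {
  // Anchors without an href cannot be MIDI links.
  if (typeof link.href !== 'string') {
    return false;
  }
  return link.href.includes('.mid');
};

// Plain objects stand in for anchor elements here (hypothetical data).
const sampleLinks = [
  { href: 'songs/theme.mid' },
  { href: 'about.html' },
  {},
];

console.log(sampleLinks.filter(isMidi).length); // prints 1
```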

It is also good to keep in mind that many websites prohibit web scraping in their Terms of Service, so always remember to double-check this beforehand. With that, let's dive into the specifics!

jsdom

jsdom is a pure-JavaScript implementation of many web standards for Node.js, and is a great tool for testing and scraping web applications. Install it by running npm install jsdom in your terminal.

The following code is all you need to gather all of the links to MIDI files on the Video Game Music Archive page referenced earlier:
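A minimal sketch of that approach, under a few assumptions: the page URL is passed as the first command-line argument rather than hard-coded, findMidiLinks is a hypothetical helper name, the parentheses check uses a simple regular expression of my choosing, and Got is loaded with a dynamic import so the sketch works whether the installed release is CommonJS or ESM-only.

```javascript
// True when the anchor's href points at a .mid file.
const isMidi = (link) =>
  typeof link.href === 'string' && link.href.includes('.mid');

// True when the link text contains no parentheses; parenthesised entries
// are treated as alternate versions of the same song.
const noParens = (link) => !/[()]/.test(link.textContent);

// Pure step: works on any object with querySelectorAll, such as a jsdom document.
const findMidiLinks = (document) =>
  Array.from(document.querySelectorAll('a')) // NodeList -> array
    .filter(isMidi)
    .filter(noParens)
    .map((link) => link.href);

// Network step: fetch the HTML with Got, then build a DOM from it with jsdom.
const main = async (pageUrl) => {
  // Loaded here so the helpers above stay usable without the packages.
  const { JSDOM } = require('jsdom');
  const { default: got } = await import('got');

  const response = await got(pageUrl);
  // The url option lets jsdom resolve relative hrefs against the page address.
  const dom = new JSDOM(response.body, { url: pageUrl });
  findMidiLinks(dom.window.document).forEach((href) => console.log(href));
};

// Assumption: the page to scrape is given as the first CLI argument.
if (process.argv[2]) {
  main(process.argv[2]).catch((err) => {
    console.error(err);
    process.exitCode = 1;
  });
}
```

Because the address is not hard-coded in this sketch, it would be run as node index.js followed by the page URL.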

This uses a very simple query selector, a, to access all hyperlinks on the page, along with a few functions to filter through this content to make sure we're only getting the MIDI files we want. The noParens() filter function uses a regular expression to leave out all of the MIDI files that contain parentheses, which means they are just alternate versions of the same song.

Save that code to a file named index.js, and run it with the command node index.js in your terminal.

If you want a more in-depth walkthrough on this library, check out this other tutorial I wrote on using jsdom.

Cheerio

Cheerio is a library that is similar to jsdom but was designed to be more lightweight, making it much faster. It implements a subset of core jQuery, providing an API that many JavaScript developers are familiar with.

Install it by running npm install cheerio in your terminal.

The code we need to accomplish this same task is very similar:
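A minimal sketch of the Cheerio version, with the same assumptions as before: the page URL comes from the first command-line argument, the parentheses check is a simple regular expression of my choosing, and Got is loaded with a dynamic import. Cheerio passes (index, element) to filter callbacks, and its element nodes expose attributes under attribs and text nodes under children.

```javascript
// Cheerio calls filter callbacks with (index, element).
const isMidi = (i, link) =>
  typeof link.attribs.href === 'string' && link.attribs.href.includes('.mid');

// Reads the first child node's text; an anchor with no text child counts as ''.
const noParens = (i, link) => {
  const first = link.children[0];
  const text = first && typeof first.data === 'string' ? first.data : '';
  return !/[()]/.test(text);
};

const main = async (pageUrl) => {
  // Loaded here so the predicates above stay usable without the packages.
  const cheerio = require('cheerio');
  const { default: got } = await import('got');

  const response = await got(pageUrl);
  const $ = cheerio.load(response.body);

  // filter() is part of Cheerio's API, so no array conversion is needed.
  $('a')
    .filter(isMidi)
    .filter(noParens)
    .each((i, link) => {
      console.log(link.attribs.href);
    });
};

// Assumption: the page to scrape is given as the first CLI argument.
if (process.argv[2]) {
  main(process.argv[2]).catch((err) => {
    console.error(err);
    process.exitCode = 1;
  });
}
```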

Here you can see that using functions to filter through content is built into Cheerio's API, so we don't need any extra code for converting the collection of elements to an array. Replace the code in index.js with this new code, and run it again. The execution should be noticeably quicker because Cheerio is a less bulky library.

If you want a more in-depth walkthrough, check out this other tutorial I wrote on using Cheerio.

Puppeteer

Puppeteer is much different than the previous two in that it is primarily a library for headless browser scripting. Puppeteer provides a high-level API to control Chrome or Chromium over the DevTools protocol. It’s much more versatile because you can write code to interact with and manipulate web applications rather than just reading static data.

Install it by running npm install puppeteer in your terminal.

Web scraping with Puppeteer is much different than the previous two tools because rather than writing code to grab raw HTML from a URL and then feeding it to an object, you're writing code that is going to run in the context of a browser processing the HTML of a given URL and building a real document object model out of it.

The following code snippet instructs Puppeteer's browser to go to the URL we want and access all of the same hyperlink elements that we parsed for previously:
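A minimal sketch of what that can look like, assuming the page URL is passed as the first command-line argument. The callback name pickMidiLinks is hypothetical; it is handed to page.$$eval, which runs it inside the browser page against the matched elements, so it must not refer to any Node-side variables.

```javascript
// Runs in the browser context via $$eval; the filtering is done inline
// in this one callback rather than in separate filter functions.
const pickMidiLinks = (anchors) =>
  anchors
    .filter((a) => a.href.includes('.mid') && !/[()]/.test(a.textContent))
    .map((a) => a.href);

const main = async (pageUrl) => {
  // Loaded here so the callback above stays usable without the package.
  const puppeteer = require('puppeteer');

  const browser = await puppeteer.launch();
  try {
    const page = await browser.newPage();
    await page.goto(pageUrl);

    // Select every hyperlink and let the callback reduce them to MIDI URLs.
    const links = await page.$$eval('a', pickMidiLinks);
    links.forEach((href) => console.log(href));
  } finally {
    // Always shut the browser down, even if navigation fails.
    await browser.close();
  }
};

// Assumption: the page to scrape is given as the first CLI argument.
if (process.argv[2]) {
  main(process.argv[2]).catch((err) => {
    console.error(err);
    process.exitCode = 1;
  });
}
```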

Notice that we are still writing some logic to filter through the links on the page, but instead of declaring more filter functions, we're just doing it inline. There is some boilerplate code involved for telling the browser what to do, but we don't have to use another Node module for making a request to the website we're trying to scrape. Overall it's a lot slower if you're doing simple things like this, but Puppeteer is very useful if you are dealing with pages that aren't static.

For a more thorough guide on how to use more of Puppeteer's features to interact with dynamic web applications, I wrote another tutorial that goes deeper into working with Puppeteer.

Playwright

Playwright is another library for headless browser scripting, written by the same team that built Puppeteer. Its API and functionality are nearly identical to Puppeteer's, but it was designed to be cross-browser and works with Firefox and WebKit as well as Chrome/Chromium.

Install it by running npm install playwright in your terminal.

The code for doing this task using Playwright is largely the same, with the exception that we need to explicitly declare which browser we're using:
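A minimal sketch, assuming the page URL is the first command-line argument and an optional browser name is the second. SUPPORTED_BROWSERS and pickMidiLinks are hypothetical names; the browser types themselves (chromium, firefox, webkit) are the ones Playwright exposes.

```javascript
// Browser types exposed by the playwright package.
const SUPPORTED_BROWSERS = ['chromium', 'firefox', 'webkit'];

// Runs in the browser context via $$eval, so it must be self-contained.
const pickMidiLinks = (anchors) =>
  anchors
    .filter((a) => a.href.includes('.mid') && !/[()]/.test(a.textContent))
    .map((a) => a.href);

const main = async (pageUrl, browserName = 'chromium') => {
  if (!SUPPORTED_BROWSERS.includes(browserName)) {
    throw new Error(`Unknown browser: ${browserName}`);
  }
  // Loaded here so the helpers above stay usable without the package.
  const playwright = require('playwright');

  // The browser is chosen explicitly, unlike in the Puppeteer version.
  const browser = await playwright[browserName].launch();
  try {
    const page = await browser.newPage();
    await page.goto(pageUrl);
    const links = await page.$$eval('a', pickMidiLinks);
    links.forEach((href) => console.log(href));
  } finally {
    await browser.close();
  }
};

// Assumption: URL is the first CLI argument, browser name the optional second.
if (process.argv[2]) {
  main(process.argv[2], process.argv[3]).catch((err) => {
    console.error(err);
    process.exitCode = 1;
  });
}
```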

This code should do the same thing as the code in the Puppeteer section and should behave similarly. The advantage to using Playwright is that it is more versatile as it works with more than just one type of browser. Try running this code using the other browsers and seeing how it affects the behavior of your script.

As with the other libraries, I wrote another tutorial that goes deeper into working with Playwright if you want a longer walkthrough.

The vast expanse of the World Wide Web

Now that you can programmatically grab things from web pages, you have access to a huge source of data for whatever your projects need. One thing to keep in mind is that changes to a web page’s HTML might break your code, so make sure to keep everything up to date if you're building applications that rely on scraping.
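One way to notice that kind of breakage early is to fail loudly when a scrape comes back empty or malformed. The sketch below is only an illustration: checkScrape and its default threshold are hypothetical, and it assumes the scraper returns an array of href strings.

```javascript
// Throws when a scrape result looks wrong, so a changed page layout
// surfaces as an error instead of silently producing no data.
const checkScrape = (links, minExpected = 1) => {
  if (!Array.isArray(links) || links.length < minExpected) {
    const found = Array.isArray(links) ? links.length : 'a non-array';
    throw new Error(`Expected at least ${minExpected} links, got ${found}`);
  }

  // Every result should still look like a MIDI link.
  const bad = links.filter(
    (href) => typeof href !== 'string' || !href.includes('.mid')
  );
  if (bad.length > 0) {
    throw new Error(`${bad.length} result(s) do not look like MIDI links`);
  }

  return links;
};
```

Wrapping a scraper's output in a check like this before using it keeps downstream code from quietly working with an empty list.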

I’m looking forward to seeing what you build. Feel free to reach out and share your experiences or ask any questions.

- Email: [email protected]

- Twitter: @Sagnewshreds

- Github: Sagnew

- Twitch (streaming live code): Sagnewshreds